You're probably living the same contradiction every SaaS leader faces. The board wants faster delivery. Customers expect a polished product. Security can't be an afterthought. Engineering wants space to build it properly. Everyone says “move fast”, right up until a release breaks a critical flow or an auditor starts asking awkward questions.

That tension is where white-box and black-box decisions stop being test jargon and become business strategy. One approach gives you visibility into what's happening inside the product. The other tells you how it behaves from the outside, where users, attackers, and regulators interact with it. If you treat that choice as a technical footnote, you'll waste time, miss risk, and make delivery less predictable than it needs to be.

The teams that win don't argue in absolutes. They decide where they need speed, where they need certainty, and where they need both.

The Modern SaaS Delivery Dilemma

A founder pushes for an MVP in the next quarter. A product manager wants cleaner onboarding and fewer support tickets. The CTO knows the current architecture has weak spots. Security wants more rigour. Finance wants cost control. None of these people are wrong. They're just optimising for different outcomes.

That's the delivery dilemma. You're not choosing between “good engineering” and “fast engineering”. You're choosing how to apply effort so the business gets the right result at the right moment.

Two ways to look at risk

White-box thinking looks inside the system. It asks what the code is doing, where the logic branches are, how trust boundaries work, and whether the implementation itself is safe and sound.

Black-box thinking looks at the product from the outside. It asks whether the checkout works, whether authentication can be bypassed, whether the API responds correctly, and whether the user journey behaves as promised.

Both mindsets matter because SaaS products fail in both places. Some failures come from hidden logic buried in the code. Others appear only when a user, an integration, or an attacker interacts with the live system.

| Approach | Core question | Best business use | Typical strength | Typical weakness |
|---|---|---|---|---|
| White-box | What's happening inside the system? | Critical logic, internal quality, code-level assurance | Deep insight and precise control | More effort and higher maintenance |
| Black-box | What happens when someone uses the product? | MVP validation, user flows, integration confidence | Fast feedback and business relevance | Limited visibility into root causes |
| Combined | Are we safe and correct both inside and out? | Mature SaaS delivery, regulated features, investor-ready platforms | Broader confidence across code and behaviour | Requires deliberate orchestration |

What leaders get wrong

The mistake isn't picking one approach first. The mistake is turning that first choice into a dogma.

If you over-index on black-box only, you can ship quickly but stay blind to fragile internals. If you over-index on white-box only, you can create a beautifully instrumented test estate that slows delivery and still misses what happens in production.

Practical rule: Tie your testing and validation strategy to the business risk of the feature, not the preference of the loudest person in the room.

That's the operating discipline behind predictable delivery. It's also how teams earn trust from customers, investors, and internal stakeholders without dragging every release through unnecessary process.

Unpacking the Core Concepts

White-box and black-box sound abstract until you anchor them in real product decisions. The easiest way to think about them: white-box is a glass engine, where you can inspect every component. Black-box is a sealed unit, which you judge by what goes in, what comes out, and how it behaves under pressure.

In quality assurance

In QA, white-box testing means working with knowledge of the code, structure, branches, and internal logic. Engineers use that visibility to target specific paths and implementation details. This often involves unit testing, structural coverage, and logic-focused validation.

Black-box testing ignores the implementation and checks behaviour. Does the registration flow work? Does the API return the right result? Can a user complete the journey without errors? This is closer to how customers experience the product.
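To make the split concrete, here is a minimal sketch of both styles applied to the same function. The `apply_discount` rule, the `WELCOME10` code, and the threshold of 50 are all invented for illustration. The white-box tests are written with the source open and target each branch and boundary. The black-box tests are written from the stated behaviour alone.

```python
import unittest


def apply_discount(total: float, code: str) -> float:
    """Hypothetical pricing rule: WELCOME10 gives 10% off orders of 50 or more."""
    if total < 0:
        raise ValueError("total must be non-negative")
    if code == "WELCOME10" and total >= 50:
        return round(total * 0.90, 2)
    return total


class WhiteBoxDiscountTests(unittest.TestCase):
    """Written with the code open: one test per branch and boundary."""

    def test_negative_total_branch(self):
        with self.assertRaises(ValueError):
            apply_discount(-1, "WELCOME10")

    def test_just_below_threshold_skips_discount(self):
        self.assertEqual(apply_discount(49.99, "WELCOME10"), 49.99)

    def test_exactly_at_threshold_applies_discount(self):
        self.assertEqual(apply_discount(50, "WELCOME10"), 45.0)


class BlackBoxDiscountTests(unittest.TestCase):
    """Written from the promise to the customer, with no knowledge of the code."""

    def test_customer_gets_ten_percent_off(self):
        self.assertEqual(apply_discount(100, "WELCOME10"), 90.0)

    def test_unknown_code_changes_nothing(self):
        self.assertEqual(apply_discount(100, "NOPE"), 100)
```

Saved as a module, this runs with `python -m unittest`. Notice which suite would survive a rewrite of the function: the black-box tests keep passing as long as the promise holds, while the white-box tests are coupled to the branches as they exist today.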

The important point is that the performance gap between the two is often smaller than teams assume. A UCL comparative study on test prioritisation found at most a 4% variance in fault detection rates, with 60% overlap in faults detected early on. That should reset how you think about “superior” testing. In many cases, the argument isn't about one method crushing the other. It's about where each method fits best.

In application security

Security teams use the same split, but the consequences are sharper.

White-box security work includes static analysis, code review, and threat modelling. It exposes weak logic, unsafe trust boundaries, and risky implementation choices before they become public incidents.

Black-box security work simulates the outside world. It tests what an attacker can exploit through the running application, including issues that only appear during execution or through real request flows.

If your team only tests what it can see in the code, it can still miss what breaks in production.
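A toy sketch of the two security lenses, using only the Python standard library. The handler, the `eval` rule, and the `6*7` payload are assumptions made up for this example, not a real scanner or a real attack. The static check reads the code and flags a risky call. The dynamic probe never looks at the code and only checks whether the running handler executes what it is sent.

```python
import ast

# Toy handler source, invented for this example. Never eval untrusted input.
SOURCE = '''
def handle(query):
    return eval(query)
'''


def find_eval_calls(source: str) -> list[int]:
    """White-box check: parse the code and report lines that call eval()."""
    return [
        node.lineno
        for node in ast.walk(ast.parse(source))
        if isinstance(node, ast.Call)
        and isinstance(node.func, ast.Name)
        and node.func.id == "eval"
    ]


def probe_is_exploitable(handler) -> bool:
    """Black-box check: send a harmless payload and see whether it gets executed."""
    try:
        return handler("6*7") == 42
    except Exception:
        return False


# Load the toy handler so the probe can call it without reading its source.
namespace: dict = {}
exec(SOURCE, namespace)
vulnerable_handler = namespace["handle"]


def safe_handler(query: str) -> str:
    """A handler that treats input as data and simply echoes it back."""
    return query


static_hits = find_eval_calls(SOURCE)  # the code looks risky
confirmed = probe_is_exploitable(vulnerable_handler)  # the risk is reachable at runtime
```

Either check alone tells half the story. The static hit says the code contains a dangerous pattern. The probe says whether an outsider can actually reach it.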

In AI model transparency

The same pattern now matters in AI-enabled products.

A white-box model is interpretable by design. You can inspect why it made a recommendation or risk decision. A black-box model may deliver excellent predictive performance, but its reasoning is harder to explain.

That distinction matters when a product team rolls out automated lending decisions, fraud scoring, or recommendations that face customer scrutiny. If you can't explain an output, you may still have a functioning model, but you don't have a fully usable product in a regulated environment.
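As a rough illustration of "interpretable by design", the sketch below uses a plain weighted sum, the simplest kind of white-box model. The feature names, weights, and baseline are hypothetical and do not represent a real credit policy. The point is that every score comes with a per-feature breakdown a support agent or auditor can read.

```python
# Hypothetical weights, invented for illustration. Not a real credit policy.
BASELINE = 0.10
WEIGHTS = {
    "missed_payments": 0.30,
    "utilisation": 0.50,
    "account_age_years": -0.05,
}


def explain_risk(applicant: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return the risk score plus each feature's additive contribution to it."""
    contributions = {name: weight * applicant[name] for name, weight in WEIGHTS.items()}
    return BASELINE + sum(contributions.values()), contributions


def top_reasons(contributions: dict[str, float], limit: int = 2) -> list[str]:
    """Name the features that moved the score most, largest effect first."""
    ranked = sorted(contributions, key=lambda name: abs(contributions[name]), reverse=True)
    return ranked[:limit]


applicant = {"missed_payments": 2, "utilisation": 0.8, "account_age_years": 4}
score, contributions = explain_risk(applicant)
print(f"score={score:.2f}", top_reasons(contributions))
```

A black-box model would return only the score. Here the same call also yields the reasons, which is the part a regulated product usually cannot ship without.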

The practical translation

Leaders don't need to memorise testing terminology. They need to ask three questions:

- For quality: Do we need confidence in internal logic, external behaviour, or both?

- For security: Are we proving code safety, real exploitability, or both?

- For AI: Are we optimising for raw predictive power, explainability, or both?

That's the difference between managing engineering activity and directing delivery outcomes.

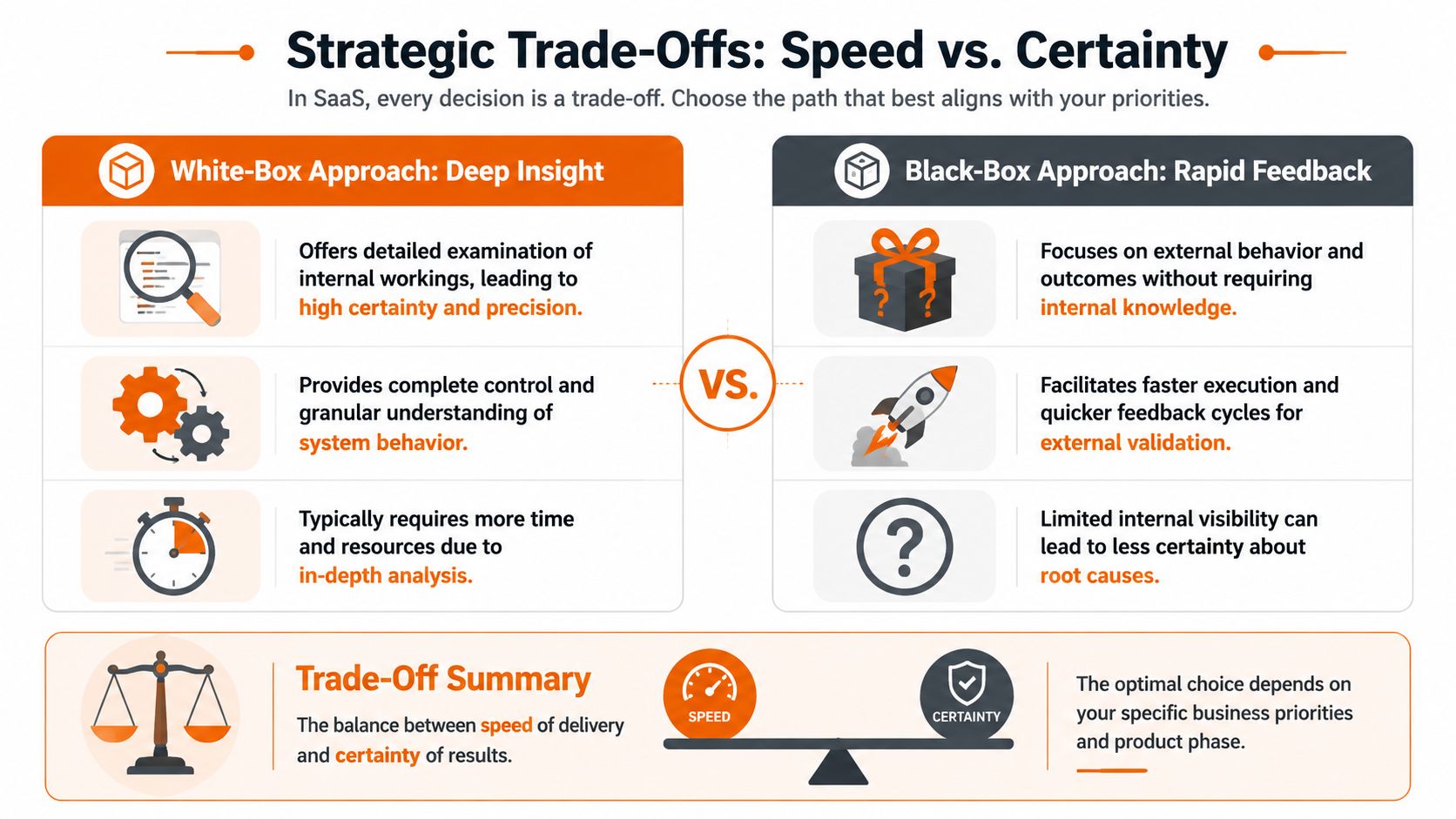

The Strategic Trade-Offs: Speed vs Certainty

Every delivery strategy is a trade. Anyone who says otherwise is selling fantasy. White-box and black-box approaches make different bargains with your time, budget, and risk tolerance.

Where white-box wins

White-box work earns its keep when internal correctness matters more than convenience. Payment routing, pricing logic, permissions, and transaction handling all fall into that category. If the feature sits close to revenue, compliance, or trust, deep inspection is usually the right call.

A 2022 study on UK fintech platforms found that white-box test suites achieved 92-96% code coverage and uncovered 30-40% more logic defects, but required 25-35% more engineering time to create and maintain. That's the trade in plain English. You buy certainty with effort.

Where black-box wins

Black-box work is usually better when the business needs fast market feedback, stable regression checks around user journeys, and less brittleness during refactoring. For MVPs, integration surfaces, and end-to-end validation, it often gives the best return on speed.

This is especially useful when product teams are still discovering the shape of the solution. If the implementation is going to change repeatedly, tying too much of your assurance layer to internals can become self-inflicted drag.

What smart leaders optimise for

Don't ask which method is better. Ask which cost is more dangerous right now.

| Business priority | White-box gives you | Black-box gives you |
|---|---|---|
| Release confidence | Confidence in internal logic and structural integrity | Confidence in user-visible behaviour |
| Speed to market | Slower setup, stronger control in critical paths | Faster feedback on features and journeys |
| Maintenance load | Higher upkeep when implementation changes | Lower coupling to internals |
| Audit and compliance pressure | Better support for proving internal rigour | Better support for validating external outcomes |

A capable delivery organisation treats this as an operating model, not a one-off choice. Process maturity matters here. If your team struggles to turn risk into repeatable practice, a framework like the CMMI maturity model in software delivery can help leaders spot where quality decisions are ad hoc instead of systematic.

Board-level view: White-box is insurance for critical internals. Black-box is acceleration for visible outcomes. Good delivery leadership knows when to buy one, and when to buy both.

The wrong move is using white-box everywhere and slowing the business down. The other wrong move is relying on black-box everywhere and hoping hidden defects stay hidden. Mature SaaS delivery sits in the middle, with intent.

A Unified Approach for Total Product Quality

The strongest SaaS teams stop treating white-box and black-box as rivals. They use them together because each one catches what the other can't.

That's not theory. It's the practical response to how modern products fail. Security flaws, brittle integrations, broken workflows, and hidden logic issues don't show up through one lens alone.

Why single-method thinking breaks down

Relying on one testing methodology creates blind spots. In security, that's dangerous. Application security guidance comparing white-box and black-box security testing shows that white-box testing can miss runtime flaws like broken authentication, while black-box testing can't see flawed internal logic. If you only run one side of that equation, you're accepting uncertainty where you don't need to.

That's why the right strategy is integrated by design.

What a unified workflow looks like

A strong delivery workflow usually looks like this:

- Start inside the code: use SAST, code review, and threat modelling to catch unsafe logic, weak boundaries, and implementation risks early.

- Validate from the outside: run targeted dynamic checks against the running application to confirm whether those risks are exploitable.

- Tie findings together: if static analysis flags risky deserialisation or access-control logic, test the live behaviour directly.

- Feed the result back into delivery: prioritise fixes based on business exposure, not just severity labels.

That pattern creates a more honest quality signal. It doesn't just tell you what might be wrong. It tells you what matters.
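The workflow above can be sketched as a small triage step. Everything here is an assumption for illustration: the finding names, the endpoints, the 1 to 5 scales, and the ordering rule. It takes findings from static analysis, marks which ones a dynamic check confirmed as exploitable, and ranks them by business exposure before raw severity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StaticFinding:
    rule: str
    endpoint: str
    severity: int  # 1 (low) to 5 (critical), as labelled by the scanner


def prioritise(
    findings: list[StaticFinding],
    confirmed_endpoints: set[str],
    exposure: dict[str, int],
) -> list[StaticFinding]:
    """Order fixes: confirmed exploitable first, then business exposure, then severity."""

    def rank(finding: StaticFinding) -> tuple[bool, int, int]:
        return (
            finding.endpoint in confirmed_endpoints,
            exposure.get(finding.endpoint, 0),
            finding.severity,
        )

    return sorted(findings, key=rank, reverse=True)


# Hypothetical inputs: what static analysis flagged, what dynamic testing
# confirmed, and how much each endpoint matters to the business (1 to 5).
findings = [
    StaticFinding("risky-deserialisation", "/import", severity=5),
    StaticFinding("weak-hash", "/profile", severity=4),
    StaticFinding("access-control-gap", "/payments", severity=3),
]
confirmed_endpoints = {"/payments"}
exposure = {"/payments": 5, "/import": 2, "/profile": 1}

for finding in prioritise(findings, confirmed_endpoints, exposure):
    print(finding.endpoint, finding.rule)
```

In this made-up data the lowest-severity finding lands at the top, because it is the only one confirmed against the running application and it sits on the payments path. That is the difference between sorting by severity labels and sorting by what matters.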

Why this matters beyond security

The same principle applies to performance, resilience, and operational quality. Internal analysis might tell you a service is fragile. External validation shows whether customers feel that fragility. You need both perspectives if you want confidence that survives contact with production.

This is also where non-functional requirements stop being a vague appendix and become operational guardrails. If your team isn't explicit about latency, reliability, recoverability, and security expectations, your white-box and black-box efforts will drift apart. A practical primer on non-functional requirements in software delivery is useful because it forces teams to define what “good enough” means.

One-team mindset: Don't separate “code quality” from “product quality”. Customers experience the result, not your internal org chart.

A unified approach doesn't mean testing everything all the time. It means choosing the right combination, then owning the outcome end to end.

Putting It Into Practice: Rite NRG Workflows

Strategy matters only if it changes delivery behaviour. Here's how I'd apply white-box and black-box thinking in three common SaaS situations.

Scenario one, a VC-backed MVP

A startup needs to prove demand fast. The product isn't stable yet, the feature set is moving, and the biggest risk is building the wrong thing too slowly.

In that case, I'd lean heavily toward black-box validation. Focus on onboarding, activation, payments, and the core user journey. Hit the API from the outside. Test the UI the way a customer would. Keep the test layer close to business outcomes so the team can refactor internals without rebuilding the entire safety net every sprint.

I'd still use targeted white-box checks for obviously risky logic, but I wouldn't let internal perfection delay market proof. Early-stage companies need learning velocity.

Scenario two, a fintech team shipping payments

Now the stakes change. A scale-up adds a payments module, touches transaction services, and knows auditors or enterprise buyers will ask harder questions.

Here, I'd shift to a white-box-dominant strategy around critical transaction paths. The goal is deeper certainty on logic, validation rules, state transitions, and failure handling. User-journey tests still matter, but they aren't enough on their own because the business risk sits inside the implementation as much as on the surface.

Leaders need discipline. Don't blanket the whole platform with maximum rigour. Put it where money movement, regulatory exposure, and customer trust are concentrated.

Scenario three, AI in a regulated product

A BNPL platform adds an AI-driven risk feature. Product wants strong predictive performance. Compliance wants explanations that stand up to scrutiny. Support wants quick answers when customers challenge decisions.

Here, the white-box and black-box conversation expands beyond testing and into model choice. A 2024 UK analysis of interpretable and black-box AI models showed that white-box models like EBMs (Explainable Boosting Machines) could match black-box performance closely in regulated sectors while providing regulator-ready explanations 50% faster. That matters because explanation speed isn't cosmetic. It affects disputes, audits, and operational flow.

The operating pattern behind all three

The workflow changes with the business context, but the delivery posture stays the same:

- Define the business risk first. Revenue risk, compliance risk, user trust risk, or discovery risk.

- Pick the dominant lens. Black-box for fast external validation. White-box for internal certainty in critical logic.

- Add the complementary lens where blind spots remain. Don't pretend one method covers everything.

- Review the strategy as the product matures. What made sense at MVP rarely fits a mature platform.

That's how senior teams avoid cargo-cult engineering. They don't worship a method. They use the method that serves the business outcome.

Your Decision Framework: Choosing the Right Mix

You don't need another abstract debate. You need a simple way to decide where to lean. Use this matrix when you're planning a feature, revisiting your QA strategy, or pressure-testing a release approach.

Decision matrix

| Factor | Lean Towards Black-Box When… | Lean Towards White-Box When… |
|---|---|---|
| Product maturity | You're proving an MVP, changing direction quickly, or refining workflows through user feedback | The product is stable, core logic is established, and failures would be expensive |
| Feature criticality | The feature supports convenience, engagement, or non-critical workflows | The feature affects payments, permissions, pricing, or other trust-sensitive logic |
| Regulatory pressure | External behaviour and user outcomes are the main concern | You must show internal rigour, traceability, or explainability |
| Architecture volatility | The implementation is likely to change often | The internal design needs close control and deliberate verification |
| Team capability | You need broader participation around business flows and acceptance criteria | You have engineers who can inspect code paths, boundaries, and internal risks confidently |
| Root-cause visibility | Fast signal matters more than deep diagnosis | Precise fault isolation matters because the cost of missing logic flaws is high |
| AI feature design | Predictive output is the primary concern | Explainability and defensibility are part of the product requirement |
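If it helps to make the matrix repeatable in planning, it can be reduced to a simple tally. This is a sketch under stated assumptions, not a scoring method from the matrix itself: the factor keys, the equal weighting, and the "within one vote means blend" rule are all choices made for this example.

```python
# Factor keys mirror the rows of the matrix. Equal weighting is an assumption.
FACTORS = (
    "product_maturity",
    "feature_criticality",
    "regulatory_pressure",
    "architecture_volatility",
    "team_capability",
    "root_cause_visibility",
    "ai_feature_design",
)


def recommend_mix(leans: dict[str, str]) -> str:
    """Tally per-factor leans ('black' or 'white') into a recommended mix."""
    unknown = set(leans) - set(FACTORS)
    if unknown:
        raise ValueError(f"unknown factors: {sorted(unknown)}")
    if any(value not in ("black", "white") for value in leans.values()):
        raise ValueError("each lean must be 'black' or 'white'")

    white = sum(1 for value in leans.values() if value == "white")
    black = len(leans) - white
    if abs(white - black) <= 1:  # too close to call, so use both lenses
        return "blend"
    return "white-box" if white > black else "black-box"


# Hypothetical planning inputs for a payments feature on a changing codebase.
payments_feature = {
    "feature_criticality": "white",
    "regulatory_pressure": "white",
    "root_cause_visibility": "white",
    "architecture_volatility": "black",
}
print(recommend_mix(payments_feature))
```

A tally like this is a conversation starter, not a verdict. In practice one factor, such as feature criticality, may deserve more weight than the rest, and the team should feel free to overrule the count.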

The questions worth asking in planning

Use these in release planning or roadmap reviews:

- What hurts the business more here? Slow delivery or hidden defects?

- Where does the risk sit? In the user journey, in the code, or in both?

- Will this feature face regulatory or investor scrutiny?

- How often will the implementation change over the next few months?

- Do we need to explain why the system made a decision, or only that it did?

My recommendation

Default to black-box for broad product confidence and white-box for concentrated risk. That's the most practical pattern for most SaaS teams.

Then sharpen it further:

- For MVPs: bias toward black-box.

- For critical transaction or security-sensitive logic: bias toward white-box.

- For regulated AI and high-trust workflows: blend both and make explainability a first-class requirement.

- For mature platforms: review the mix quarterly, because yesterday's fast lane often becomes today's risk hotspot.

Ask your engineering team one blunt question: “If this fails, will users notice first, or will auditors, attackers, and internal logs notice first?” The answer usually tells you where to lean.

A decision framework isn't about bureaucracy. It's about avoiding accidental strategy.

Beyond the Boxes: The #riteway Delivery Mindset

White-box and black-box matter. Mindset matters more.

A poor team can misuse either approach. They can drown a product in heavyweight internal checks that don't move the business forward. Or they can ship surface-level validation so fast that everyone discovers the hidden problems in production. Methods don't create outcomes by themselves. Ownership does.

What extreme ownership looks like in delivery

The #riteway mindset is simple. The team owns the business result, not just the ticket queue.

That means engineers challenge weak assumptions. Delivery leads raise risk before it becomes delay. Product and engineering stay close enough that test strategy reflects actual commercial priorities, not just habit. When the risk profile changes, the workflow changes with it.

Proactivity beats passive execution

Passive vendors wait for instructions. Strong delivery partners ask better questions.

They ask whether a black-box-heavy MVP plan is still appropriate now that enterprise buyers are asking security questions. They ask whether a white-box-heavy testing strategy is slowing down product discovery. They ask whether an AI feature is usable at all if nobody can explain its outputs clearly.

If your team isn't doing that, it's not taking ownership. It's taking orders.

A useful lens here is the broader conversation around agentic engineering versus vibe coding in AI-era software teams. The point isn't the label. The point is whether the team operates with intention, judgment, and accountability.

Good delivery teams don't just execute a plan. They improve the plan while they're executing it.

That's the difference between shipping software and building a reliable SaaS business. White-box and black-box are tools. Extreme Ownership is the force that makes those tools produce speed, quality, and trust at the same time.

If you want a delivery partner that treats white-box and black-box decisions as business levers, not just engineering tasks, talk to Rite NRG. We help SaaS teams ship faster, tighten quality, and build investor-ready products with senior engineers, proactive delivery leadership, and the #riteway mindset of Extreme Ownership.